The world of audio content creation is experiencing a seismic shift. From YouTube videos and podcasts to audiobooks and video games, the demand for high-quality voice content has never been greater. Yet traditional voice acting and dubbing come with significant challenges: expensive studio time, scheduling complexities with professional voice actors, costly re-recording when scripts change, and multilingual production that is nearly impossible to scale. Artificial intelligence has emerged as a transformative solution, making professional-quality voice acting and dubbing accessible, affordable, and remarkably versatile.

Modern AI voice tools no longer produce just robotic text-to-speech. They generate emotionally nuanced performances with natural inflection, breathing patterns, and character-specific qualities. Whether you’re creating content for entertainment, education, marketing, or accessibility, understanding and using AI voice acting and dubbing tools is essential for staying competitive in today’s content-driven landscape.

In this comprehensive guide, we’ll explore the most powerful AI tools revolutionizing voice production and provide practical strategies for implementing them to create compelling audio content that engages audiences while dramatically reducing production time and costs.

Why AI-Powered Voice Acting & Dubbing Matters

Before exploring specific tools, it helps to understand the business and creative impact of AI-powered voice acting and dubbing, which explains why content creators and businesses are rapidly adopting these technologies.

Traditional voice production presents numerous challenges. Professional voice actors charge $100-500+ per hour, with projects often requiring multiple recording sessions. Studio rental adds $50-200 per hour to costs. When scripts change—which happens frequently—entire segments require re-recording at full cost. Multilingual content multiplies these expenses across each language, often making global content distribution economically unfeasible for smaller creators.

AI-powered voice acting and dubbing removes most of these barriers. Generate voice content instantly without booking studios or coordinating schedules. Revise scripts and regenerate audio in minutes rather than days. Create multilingual versions that maintain voice consistency across languages. Scale content production from dozens to thousands of hours without proportional cost increases.

The business impact extends beyond cost savings. Content creators report transformative advantages: 70-90% reduction in voice production costs compared to traditional methods, 80-95% faster turnaround from script to finished audio, unlimited revision flexibility encouraging creative experimentation, global content accessibility through affordable multilingual production, and consistent voice quality across unlimited content volume.
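
As a rough illustration of how these figures combine, the sketch below estimates a traditional recording budget and then applies the quoted 70-90% reduction. The hourly rates are the ends of the ranges given above; the project size (10 recorded hours in 3 languages) and the function names are assumptions made only for this example.

```python
def traditional_cost(hours, actor_rate, studio_rate, languages=1):
    """Recording cost when every language needs its own studio sessions."""
    return hours * (actor_rate + studio_rate) * languages


def ai_cost(traditional, reduction_pct):
    """Cost after a percentage reduction (70 means 70% cheaper)."""
    return traditional * (100 - reduction_pct) / 100


# Assumed project: 10 recorded hours, 3 languages.
low = traditional_cost(10, actor_rate=100, studio_rate=50, languages=3)
high = traditional_cost(10, actor_rate=500, studio_rate=200, languages=3)

print(low, high)           # 4500 21000
print(ai_cost(low, 70))    # 1350.0
print(ai_cost(high, 90))   # 2100.0
```

Real budgets also include editing, direction, and subscription fees, so treat the output as an order-of-magnitude comparison rather than a quote.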

For accessibility advocates, AI voice tools democratize content creation, enabling independent creators, educators, and small businesses to produce professional audio content previously accessible only to well-funded organizations. This democratization is expanding the diversity of voices and stories reaching audiences worldwide.

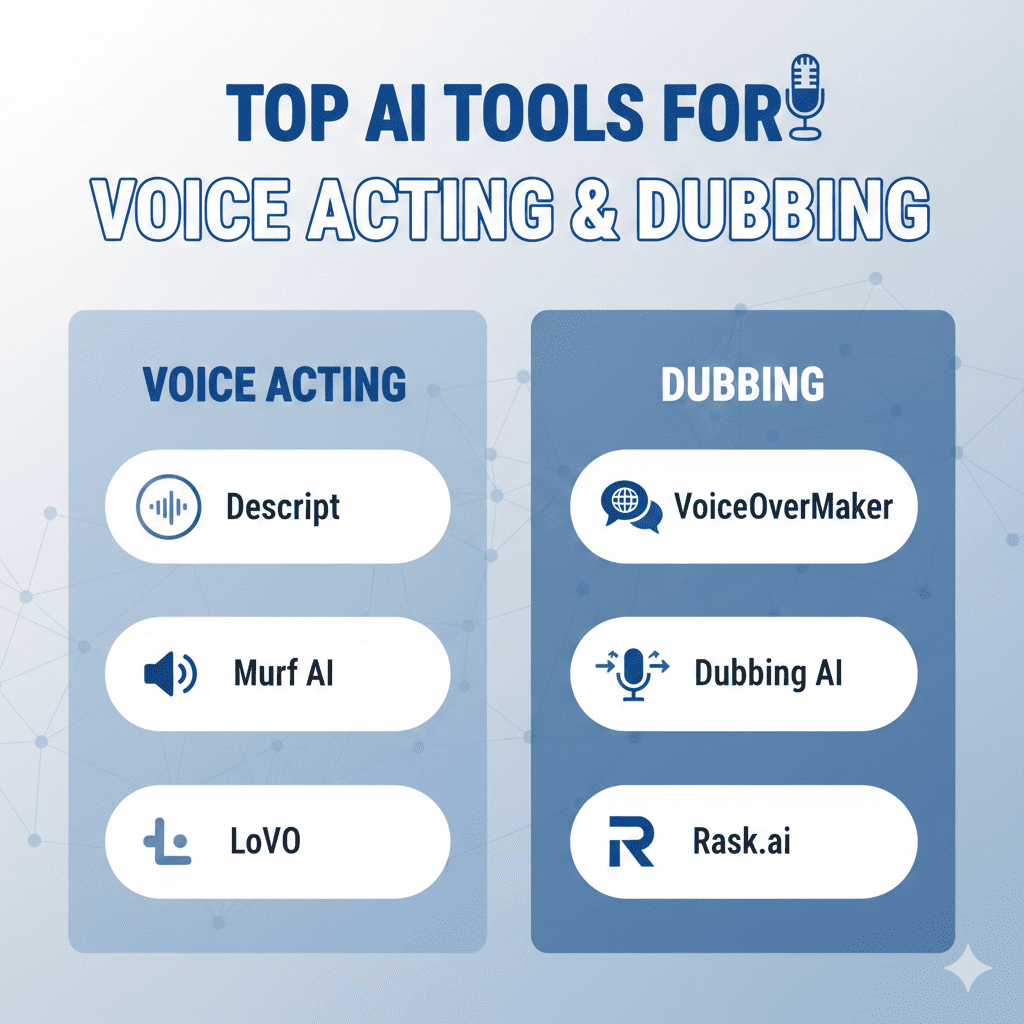

1. Professional Text-to-Speech Platforms

Advanced TTS platforms deliver near-human voice quality suitable for professional content production across entertainment, education, and business applications.

ElevenLabs

ElevenLabs has rapidly become the gold standard for AI voice generation, producing remarkably natural-sounding speech with emotional nuance and contextual understanding. The platform’s deep learning models capture subtle vocal characteristics—breathing patterns, micro-pauses, emotional inflections—that create genuinely believable performances.

For Voice Acting & Dubbing requiring maximum realism, ElevenLabs’ voice cloning capabilities create custom voices from as little as one minute of sample audio. Content creators can clone their own voices for consistent narration across unlimited content, or license professional voices for specific projects.

The multilingual capabilities support content creation in 29+ languages with natural accent handling and cultural pronunciation accuracy. A single voice model maintains consistent character across languages, essential for international content dubbing.

ElevenLabs’ Projects feature enables long-form content production with chapter organization, multiple voice casting, and collaborative editing. This workflow supports professional audiobook production, podcast creation, and video game dialogue implementation.

The emotional control features allow specification of tone, pace, and emotional quality—cheerful, serious, dramatic, whispered—enabling nuanced performances that match content mood and context. This directional control brings AI voices closer to human actor flexibility.

Descript Overdub

Descript revolutionized audio and video editing, and its Overdub feature brings AI voice generation directly into professional editing workflows. The platform creates custom voice models from your recordings, enabling text-based voice editing where typed corrections automatically generate matching audio.

For Voice Acting & Dubbing within video production, Overdub’s integration with Descript’s editing environment creates seamless workflows. Record rough narration, transcribe automatically, edit text to refine scripts, and generate new audio for changes—all without leaving the application.

The voice consistency is a key strength. Overdub maintains vocal characteristics across generated segments, so AI additions are hard to distinguish from the original recording. This consistency enables fixing mistakes, updating information, and extending content without re-recording.

Descript’s collaboration features enable team-based voice production where multiple team members contribute narration using consistent voice models. This capability proves essential for distributed teams producing cohesive content.

Murf AI

Murf AI delivers studio-quality voices specifically designed for professional content creation including videos, presentations, podcasts, and audiobooks. The platform’s curated voice library features diverse options across genders, ages, accents, and tones.

The intuitive editor provides precise control over pacing, pitch, emphasis, and pauses through simple text markup and visual controls. For Voice Acting & Dubbing requiring specific delivery styles, these controls enable directing AI performances similar to working with human voice actors.

Murf’s voice customization creates unique voices by blending characteristics from multiple base voices. This capability enables brand-specific voice development that differentiates content while avoiding generic AI voice associations.

The collaboration workspace enables team projects where multiple users contribute voice content, assign different voices to characters, and provide feedback within the platform. This integrated workflow streamlines professional voice production.

Play.ht

Play.ht combines an extensive voice library with advanced customization capabilities and developer-friendly API access. The platform offers 900+ voices across 142 languages, providing broad diversity for global content creation.

The voice cloning technology creates custom voices from audio samples, with instant cloning from shorter samples or ultra-realistic cloning from extended training audio. For Voice Acting & Dubbing requiring specific voice characteristics, this flexibility enables precise voice matching.

Play.ht’s pronunciation library handles brand names, technical terminology, and non-standard words accurately. Users can specify phonetic pronunciations that the AI consistently applies, essential for specialized content with industry-specific vocabulary.
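
The idea behind a pronunciation library can be sketched independently of any platform: keep a lexicon of written forms and their respellings, and substitute them before the text reaches the voice engine. The lexicon entries and the `apply_lexicon` helper below are hypothetical and are not Play.ht's API.

```python
import re

# Hypothetical lexicon: written form -> respelling the voice should read.
LEXICON = {
    "SQL": "sequel",
    "nginx": "engine x",
    "Kubernetes": "koo-ber-net-eez",
}


def apply_lexicon(text, lexicon):
    """Replace whole-word lexicon entries before sending text to a TTS engine."""
    if not lexicon:
        return text
    # Longest keys first so a longer entry wins over a shorter one it contains.
    keys = sorted(lexicon, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(re.escape(k) for k in keys) + r")\b")
    return pattern.sub(lambda m: lexicon[m.group(1)], text)


print(apply_lexicon("Deploy nginx and SQL on Kubernetes.", LEXICON))
# Deploy engine x and sequel on koo-ber-net-eez.
```

Matching on word boundaries keeps a term such as "MySQL" untouched, which is usually what you want when the lexicon entry is only meant for the standalone word.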

The API access enables integration with content management systems, video platforms, and custom applications. This programmatic access supports automated voice generation at scale for organizations producing high volumes of voiced content.

2. AI Dubbing and Localization Platforms

Translating content into multiple languages traditionally required recording new voice tracks for each language. AI dubbing tools automate this process while maintaining voice consistency.

Papercup

Papercup specializes in AI dubbing for video content, combining machine translation with AI voice synthesis and human quality control. The platform translates video content and generates dubbed audio that maintains timing synchronization with original videos.
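
Timing synchronization is easier to picture with numbers. Translated lines are often longer or shorter than the original, so a dubbing pipeline has to fit each line into the time slot of the source audio. The sketch below is a generic illustration, not Papercup's method; the function names and the rate limits (0.85x to 1.2x) are assumptions.

```python
def fit_rate(original_seconds, dubbed_seconds, max_rate=1.2, min_rate=0.85):
    """Return the playback-rate multiplier that fits a dubbed line into its slot.

    A rate above 1.0 speeds the dubbed audio up; below 1.0 slows it down.
    The result is clamped so speech stays natural (the limits are assumed).
    """
    if original_seconds <= 0 or dubbed_seconds <= 0:
        raise ValueError("durations must be positive")
    rate = dubbed_seconds / original_seconds
    return max(min_rate, min(max_rate, rate))


def needs_rewrite(original_seconds, dubbed_seconds, max_rate=1.2):
    """True when even the fastest allowed rate cannot fit the line."""
    return dubbed_seconds / original_seconds > max_rate


print(fit_rate(4.0, 4.5))        # 1.125
print(fit_rate(4.0, 5.0))        # 1.2
print(needs_rewrite(4.0, 5.0))   # True
```

When `needs_rewrite` returns True, the usual remedy is a shorter translation rather than faster speech, which is one place where human review of the script pays off.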

For Voice Acting & Dubbing requiring professional localization, Papercup’s hybrid approach balances AI efficiency with human oversight. Linguists review translations and voice performances, ensuring cultural appropriateness and natural delivery.

The voice matching capabilities create target-language voices that maintain similar characteristics to original speakers, preserving personality and tone across languages. This consistency maintains content feel even as language changes.

Papercup’s enterprise features support large-scale content localization for media companies, educational platforms, and corporate training programs requiring multilingual versions of extensive video libraries.

Deepdub

Deepdub delivers end-to-end AI dubbing specifically designed for entertainment content including films, television series, and documentaries. The platform’s sophisticated lip-sync technology adjusts audio timing to match on-screen mouth movements naturally.

The emotional AI analyzes original performances and replicates emotional qualities in target languages. For Voice Acting & Dubbing requiring dramatic performances, this emotional transfer maintains performance intensity across languages.

Deepdub’s voice casting assigns appropriate AI voices to different characters, maintaining distinct personality for each speaker throughout content. The platform handles complex scenes with multiple overlapping speakers while preserving clarity and character distinction.

The quality assurance workflow includes human verification at critical points, ensuring output meets professional standards for theatrical and broadcast distribution.

Speechify Dubbing Studio

Speechify, known for text-to-speech reading applications, has expanded into dubbing with enterprise-focused translation and voice generation. The platform supports rapid localization of educational content, marketing videos, and training materials.

When fast turnaround matters, Speechify’s automated pipeline takes videos from upload to translated, dubbed versions with minimal manual intervention. This efficiency lets organizations keep content current across multiple languages simultaneously.

The voice consistency features ensure brand voice remains recognizable across languages, important for maintaining brand identity in international markets.

3. Voice Cloning and Custom Voice Platforms

Creating unique, ownable voices enables distinctive audio branding and personalized content experiences.

Resemble AI

Resemble AI specializes in high-quality voice cloning with emotional control, making it popular for character voices in games, virtual assistants, and branded content. The platform creates custom voice models from audio samples that capture unique vocal characteristics.

For Voice Acting & Dubbing requiring character consistency, Resemble’s emotional granularity enables directing performances with specified emotions—happy, sad, angry, excited—while maintaining character voice integrity. This control enables dynamic performances without re-recording with human actors.

The real-time voice synthesis enables interactive applications where AI voices respond to user inputs immediately. This capability proves essential for conversational AI, virtual characters in games, and interactive storytelling.

Resemble’s watermarking technology embeds detectable signatures in generated audio, providing authentication and provenance tracking important for combating deepfake misuse.

Respeecher

Respeecher delivers Hollywood-quality voice synthesis used in major film productions for de-aging voices, replacing dialogue, and creating synthetic performances. The platform’s sophisticated models capture extremely subtle vocal characteristics.

The voice matching capabilities recreate specific actors’ voices for situations where re-recording is impractical—archive footage, posthumous performances, or logistical constraints. For Voice Acting & Dubbing in professional entertainment, Respeecher’s quality meets theatrical standards.

The emotion and style transfer enables directing synthetic performances with fine-grained control over delivery nuances. Voice directors specify desired performance qualities and receive results matching creative intent.

Respeecher’s ethical framework includes verification systems ensuring proper authorization for voice usage, addressing concerns about unauthorized voice cloning.

Veritone Voice

Veritone Voice provides enterprise voice AI including custom voice creation, voice licensing marketplace, and broadcast-quality synthesis. The platform serves media companies, broadcasters, and enterprises requiring brand-specific voices.

For Voice Acting & Dubbing at enterprise scale, Veritone’s infrastructure handles high-volume voice generation with reliability and consistency required for mission-critical applications like news production and automated content creation.

The voice licensing marketplace connects voice actors with organizations seeking AI voice models, creating economic opportunities for actors while providing businesses access to diverse, licensed voices.

4. AI Voice Acting for Gaming and Interactive Media

Video games and interactive experiences require massive volumes of dialogue with branching possibilities. AI voice tools make previously impractical voice implementations feasible.

Sonantic (Acquired by Spotify)

Sonantic, now part of Spotify, pioneered emotionally intelligent AI voices specifically for gaming and interactive entertainment. The platform generates performances with subtle emotional nuances responsive to narrative context.

For Voice Acting & Dubbing in games, Sonantic’s dynamic voice generation creates dialogue variations based on game state, player choices, and character relationships. This reactivity creates more immersive experiences than traditional pre-recorded dialogue allows.

The emotion vectors enable real-time emotional adjustment, so character voices reflect current emotional states—stress during combat, relief after victory, sadness during tragic moments—without requiring separate recordings for every emotional variation.
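
One way to think about emotion vectors driven by game state is as a small set of weights computed at runtime and passed to the voice engine with each line. The sketch below is purely illustrative: the state fields, the emotion names, and the weighting are assumptions, not Sonantic's actual parameters.

```python
def emotion_weights(health, in_combat, just_won):
    """Map simple game state to emotion weights that sum to 1.0.

    health is expected in the range 0.0 (nearly dead) to 1.0 (full health).
    """
    health = max(0.0, min(1.0, health))
    raw = {
        "stress": (1.0 - health) + (0.5 if in_combat else 0.0),
        "relief": 1.0 if just_won else 0.0,
        "neutral": 0.25,  # small baseline so the weights never all reach zero
    }
    total = sum(raw.values())
    return {name: value / total for name, value in raw.items()}


combat = emotion_weights(health=0.2, in_combat=True, just_won=False)
victory = emotion_weights(health=1.0, in_combat=False, just_won=True)
print(max(combat, key=combat.get))    # stress
print(max(victory, key=victory.get))  # relief
```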

Replica Studios

Replica Studios focuses specifically on AI voice for games, virtual reality, and metaverse applications. The platform’s voice library features performance-ready character voices across diverse types—heroes, villains, narrators, NPCs.

The ethical voice library includes only voices where actors have explicitly consented to AI usage, addressing industry concerns about actor rights and fair compensation. For Voice Acting & Dubbing in professional game development, this ethical foundation provides important legal protections.

Replica’s Unity and Unreal Engine integrations enable direct implementation of AI voices into game development workflows. Developers prototype dialogue quickly, test narrative branches, and iterate based on playtesting without expensive recording sessions.

The dialogue system handles context-aware delivery where the same line is performed differently based on preceding events, character relationships, or emotional context. This sophisticated reactivity creates more believable character performances.

Inworld AI

Inworld AI delivers AI characters with integrated voice, animation, and behavioral AI for games and virtual worlds. The platform creates fully realized characters that speak dynamically in response to player interactions.

For Voice Acting & Dubbing in procedurally generated content and emergent gameplay, Inworld enables characters to discuss topics and respond to situations developers haven’t specifically scripted. This generative capability creates unprecedented interaction depth.

The character consistency maintains personality, speech patterns, and emotional characteristics across unlimited dialogue, ensuring characters feel coherent regardless of conversation topics.

5. Audiobook Narration Tools

Audiobook production traditionally required days of studio recording with professional narrators. AI tools have transformed production economics and accessibility.

Speechify Audiobook Creator

Speechify enables authors to convert written books into audiobooks using AI narration. The platform provides natural-sounding voices suitable for various genres from fiction to non-fiction.

For Voice Acting & Dubbing in publishing, Speechify’s affordability makes audiobook creation viable for independent authors who couldn’t afford traditional narration costs. This democratization is expanding audiobook availability.

The chapter organization and voice selection enable appropriate narrator choices for different book sections—different voices for different characters in fiction, or consistent professional narration for non-fiction content.

Beyondwords

Beyondwords specializes in automated audio content creation for publishers, converting articles, blog posts, and books into audio format. The platform’s natural voices deliver engaging narration appropriate for news, educational content, and literary works.

The voice selection includes options optimized for different content types—authoritative for news, warm and engaging for lifestyle content, clear and measured for educational material. For Voice Acting & Dubbing across diverse content, this variety ensures appropriate tone matching.

Beyondwords’ CMS integrations automate audio generation when new written content publishes, creating audio versions without manual intervention. This automation enables publishers to offer audio content across entire catalogs.
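
A publish-triggered workflow like this generally needs a way to tell which articles still lack up-to-date audio. A minimal sketch, assuming a hypothetical CMS export of article ids and text, is to fingerprint the text and voice together and regenerate only when the fingerprint is new. None of the names below come from Beyondwords.

```python
import hashlib


def content_key(text, voice):
    """Stable fingerprint of the text and voice that would produce the audio."""
    payload = f"{voice}\n{text}".encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def articles_needing_audio(articles, generated_keys, voice):
    """Return ids of articles whose current text has no matching audio yet.

    articles: dict of article id -> current text (hypothetical CMS export).
    generated_keys: set of fingerprints for audio that already exists.
    """
    return [
        article_id
        for article_id, text in articles.items()
        if content_key(text, voice) not in generated_keys
    ]


articles = {"a1": "Hello world.", "a2": "Updated text."}
done = {content_key("Hello world.", "narrator")}
print(articles_needing_audio(articles, done, "narrator"))  # ['a2']
```

Because the voice name is part of the fingerprint, switching narrators also marks every article as needing new audio.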

Audiary

Audiary delivers high-quality audiobook production specifically designed for authors and publishers. The platform’s AI narrators are trained on audiobook narration, understanding genre conventions and pacing appropriate for sustained listening.

For Voice Acting & Dubbing in literary content, Audiary’s natural reading rhythm and appropriate pacing create comfortable listening experiences for full-length books. The AI handles dialogue attribution, narrator intrusions, and chapter transitions appropriately.

6. Podcast and Content Creation Tools

Podcasters and content creators need efficient voice production for regular content schedules. AI tools enable consistent output without constant recording sessions.

Descript

Beyond Overdub, Descript’s comprehensive audio and video editing capabilities make it essential for podcast production. The Studio Sound feature uses AI to improve audio quality, removing background noise and optimizing voice clarity.

For Voice Acting & Dubbing in podcast production, Descript’s transcription-based editing enables precise control while maintaining workflow speed. Edit audio by editing text, with changes reflected in audio automatically.

The filler word removal automatically detects and removes “ums,” “ahs,” and other speech disfluencies, cleaning up recordings without tedious manual editing. This automation accelerates post-production substantially.
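
Conceptually, filler removal works on a transcript with word-level timestamps: drop the filler words, then keep the remaining time ranges. The sketch below illustrates that idea with a hypothetical transcript format of (word, start, end) tuples; it is not Descript's implementation.

```python
FILLERS = {"um", "uh", "ah", "er"}


def keep_ranges(words, fillers=FILLERS):
    """Given (word, start, end) tuples, return merged time ranges to keep."""
    ranges = []
    for word, start, end in words:
        if word.lower().strip(".,!?") in fillers:
            continue  # skip this word's audio entirely
        if ranges and ranges[-1][1] == start:
            ranges[-1] = (ranges[-1][0], end)  # extend a contiguous range
        else:
            ranges.append((start, end))
    return ranges


words = [("So", 0.0, 0.3), ("um,", 0.3, 0.7), ("we", 0.7, 0.9), ("start", 0.9, 1.4)]
print(keep_ranges(words))  # [(0.0, 0.3), (0.7, 1.4)]
```

An audio tool would then concatenate the kept ranges, typically with short crossfades so the cuts are not audible.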

Adobe Podcast

Adobe Podcast (formerly Project Shasta) applies AI to podcast audio enhancement and editing. The Enhance Speech feature transforms poor-quality recordings into studio-quality audio using AI audio processing.

The automatic transcription and smart editing tools accelerate podcast production workflows. When a project needs professional polish, Adobe Podcast’s AI enhancements can rescue recordings made in suboptimal conditions.

The Remix feature adjusts audio length to match target durations, compressing or expanding content while maintaining natural speech patterns. This capability simplifies fitting content into specific time slots.

Riverside.fm

Riverside.fm delivers remote podcast and video recording with AI-powered audio enhancement and transcription. The platform captures high-quality local recordings from each participant, avoiding the quality loss caused by unstable internet connections.

The Magic Audio features use AI to balance levels, reduce noise, and enhance clarity automatically. For productions involving remote collaborators, Riverside helps keep quality consistent regardless of participants’ recording environments.

7. Video Content Dubbing and Voiceover

Video content requires synchronized voice that matches timing and maintains engagement. AI tools streamline video voiceover production.

Synthesia

Synthesia creates AI video with synchronized lip-sync and natural voice, enabling video content creation without cameras or studios. The platform’s AI avatars deliver scripted content with appropriate voice and facial animations.

For Voice Acting & Dubbing in corporate training, educational content, and marketing videos, Synthesia’s automation enables rapid content production and easy updating when information changes. The AI avatars speak naturally while maintaining eye contact and appropriate gestures.

The multilingual capabilities create translated video versions where avatars speak different languages with synchronized lip movements. This localization capability makes global content distribution dramatically more affordable.

HeyGen

HeyGen delivers AI video generation and dubbing with remarkably natural results. The platform translates and dubs existing videos into multiple languages while maintaining lip-sync with original footage.

The voice cloning creates target-language voices matching original speakers’ characteristics, preserving personality across translations. For Voice Acting & Dubbing in marketing and educational videos, this consistency maintains brand voice and presenter identity internationally.

HeyGen’s avatar customization creates branded presenters for consistent video content across organizations. The AI avatars deliver scripts naturally while maintaining on-brand appearance and delivery style.

Rask AI

Rask AI specializes in AI video localization combining translation, voice cloning, and lip-sync for seamless dubbing. The platform handles complex videos with multiple speakers, maintaining distinct voices for each person across languages.

For Voice Acting & Dubbing requiring professional localization quality, Rask’s attention to cultural nuances and idiomatic expressions produces translations that feel natural rather than literally translated.

The voice preservation maintains speaker characteristics across languages so presenters remain recognizable even as language changes, important for personality-driven content.

8. Real-Time Voice Transformation

Live streaming, broadcasting, and real-time communication benefit from AI voice transformation that works instantaneously.

Voicemod

Voicemod provides real-time voice changing for gaming, streaming, and content creation. While originally focused on creative voice effects, the platform now includes realistic voice synthesis and transformation.

For Voice Acting & Dubbing in live content, Voicemod enables creators to perform different character voices in real-time during streaming or recording. This capability supports character-based content creation without separate voice actors.

The soundboard integration adds voice effects, background audio, and sound effects on-demand during live performances, enabling dynamic audio production.

Respeecher Real-Time

Respeecher’s real-time capabilities enable live voice transformation during streaming, broadcasting, or interactive experiences. The technology transforms voices with minimal latency suitable for conversational interactions.

For applications requiring immediate response, real-time synthesis enables virtual assistants, conversational characters, and live performance augmentation.

Modulate

Modulate delivers real-time voice transformation specifically designed for gaming and social platforms. The technology changes voices while maintaining emotional expression and conversational naturalness.

The safety features include toxicity detection and voice authentication, addressing concerns that voice disguise could enable harassment. In social applications, these safeguards support positive community experiences.

9. Accessibility and Assistive Technology

AI voice tools dramatically improve content accessibility for people with disabilities, expanding audience reach while supporting inclusivity.

Natural Reader

Natural Reader converts text to speech specifically for accessibility applications including dyslexia support, visual impairment assistance, and learning accommodations. The platform’s natural voices make extended listening comfortable.

Natural Reader makes written content accessible as audio. Educational materials, websites, and documents become available to broader audiences through voice.

Speechify

Speechify specializes in making written content accessible through natural AI voice narration. The platform serves students with learning differences, busy professionals consuming content during commutes, and anyone preferring audio to reading.

The speed control enables accelerated listening up to 900 words per minute while maintaining comprehension through AI-optimized audio processing. For listeners who want to consume information efficiently, this flexibility serves diverse needs.
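
To put the speed figure in perspective, listening time is simply word count divided by words per minute. In the sketch below, the 9,000-word document and the 150 words-per-minute baseline for ordinary narration are assumptions chosen for illustration; only the 900 figure comes from the text above.

```python
def listening_minutes(word_count, words_per_minute):
    """Minutes needed to listen to a text at a given speaking rate."""
    if words_per_minute <= 0:
        raise ValueError("words_per_minute must be positive")
    return word_count / words_per_minute


print(listening_minutes(9000, 150))  # 60.0
print(listening_minutes(9000, 900))  # 10.0
```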

Voice Dream

Voice Dream delivers premium text-to-speech specifically designed for dyslexia, vision impairment, and reading differences. The high-quality voices and careful pacing support extended comfortable listening.

The document support includes PDFs, ebooks, web articles, and more, making diverse content accessible. Broad format support means fewer documents remain out of reach for listeners with accessibility needs.

10. Enterprise and API Solutions

Organizations requiring voice AI at scale benefit from enterprise platforms offering customization, control, and integration capabilities.

Amazon Polly

Amazon Polly provides text-to-speech as a cloud service through AWS, offering neural voices with natural prosody and extensive language support. The service’s scalability handles enterprise-volume voice generation reliably.

For Voice Acting & Dubbing requiring integration with existing systems, Polly’s API enables embedding voice generation in applications, websites, and automated workflows. The pay-per-use pricing makes it cost-effective across usage levels.

The SSML support provides fine-grained control over pronunciation, emphasis, pacing, and prosody through markup language. This control enables directing AI performance precisely for specialized needs.
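
SSML is plain XML, so it can be assembled and checked before any request is sent. The sketch below builds a small document using the standard `<speak>`, `<prosody>`, and `<break>` elements; the helper names are hypothetical, and which tags and attribute values a given voice accepts should be confirmed in the provider's documentation.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape


def ssml_sentence(text, rate=None, pause_ms=0):
    """Wrap one sentence in SSML, optionally changing its rate and adding a pause."""
    body = escape(text)  # escape &, <, > so the markup stays well-formed
    if rate:
        body = f'<prosody rate="{rate}">{body}</prosody>'
    if pause_ms:
        body += f'<break time="{pause_ms}ms"/>'
    return body


def build_ssml(parts):
    """Join pre-built fragments into a single <speak> document."""
    return "<speak>" + "".join(parts) + "</speak>"


ssml = build_ssml([
    ssml_sentence("Terms & conditions apply.", rate="slow", pause_ms=400),
    ssml_sentence("Thanks for listening."),
])
ET.fromstring(ssml)  # raises ParseError if the markup is not well-formed
print(ssml)
```

With the AWS SDK for Python, a string like this would typically be passed to the Polly client's `synthesize_speech` call with `TextType="ssml"`; that step needs AWS credentials and is left out of the sketch.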

Google Cloud Text-to-Speech

Google Cloud TTS leverages DeepMind’s WaveNet technology for remarkably natural voice synthesis. The platform offers extensive language coverage and custom voice training for enterprise needs.

The voice tuning capabilities enable adjusting pitch, speed, and other parameters to create distinctive brand voices. For Voice Acting & Dubbing requiring brand consistency, these customizations ensure recognizable audio identity.

Google’s AutoML Custom Voice creates fully custom voice models trained on your audio data, enabling proprietary voices with unique characteristics unavailable in standard libraries.

Microsoft Azure Speech

Microsoft Azure Speech Services delivers enterprise text-to-speech with neural voices and custom voice capabilities. The platform integrates with Microsoft’s ecosystem while providing standalone API access.

For Voice Acting & Dubbing in enterprise environments, Azure’s security, compliance, and reliability features meet corporate requirements for sensitive content. The service handles healthcare, financial, and other regulated industries.

The Custom Neural Voice creates brand-specific voices trained on provided audio data, with professional voice talents available through Microsoft’s partnerships for organizations needing licensed custom voices.

Implementing AI Voice Acting & Dubbing Strategy

Successfully adopting AI voice tools requires thoughtful strategy considering quality, ethics, workflow integration, and audience expectations.

Start by identifying use cases where AI voice provides clear advantages—high-volume content, frequent revisions, multilingual needs, or budget constraints. Focus initial implementation where benefits are most obvious and measurable.

Establish quality standards determining when AI voices suffice versus when human voice actors remain necessary. Flagship content and emotionally critical moments may warrant human performance while routine content benefits from AI efficiency.

Address ethical considerations transparently. Disclose AI voice usage when appropriate, especially in contexts where the audience might assume a human performance. Ensure voice cloning has proper authorization from voice owners.

Integrate AI tools into existing workflows gradually rather than replacing those workflows wholesale. Many creators find hybrid approaches effective: human voice for primary content, with AI for variations, updates, and translations.

Train team members thoroughly on AI voice tools and best practices. Effective AI voice direction differs from directing human actors, requiring understanding of tool-specific controls and limitations.

Monitor audience response to AI-voiced content. Track engagement metrics, gather feedback, and refine approaches based on actual reception rather than assumptions about AI voice acceptability.

The Future of Voice Acting & Dubbing

Voice Acting & Dubbing AI continues evolving rapidly. Emerging capabilities include real-time emotional adjustment during generation, perfect voice aging for flashback scenes or time-jump narratives, multi-speaker conversations with natural interruption and overlap patterns, and accent transformation while maintaining speaker identity.

The professional voice acting industry is adapting to AI reality. Forward-thinking performers license their voices for AI models, earning ongoing royalties while expanding their reach beyond physical recording capacity. Industry organizations are developing ethical frameworks and compensation models that benefit both AI advancement and voice actor livelihoods.

The future likely involves collaboration between AI and human talent rather than replacement. AI handles volume and variation while humans provide creative direction, emotional intelligence, and nuanced performances for critical moments.

Conclusion

The revolution in voice acting and dubbing has transformed audio production from an expensive, time-intensive process into accessible, flexible content creation. The tools explored in this guide enable creators to produce professional voice content that previously required prohibitive budgets and specialized expertise.

Success with AI voice tools requires balancing efficiency against quality, automation against human touch, and cost savings against ethical responsibility. AI voice acting and dubbing delivers the greatest value when strategically applied to appropriate use cases while maintaining quality standards and ethical practices.

The competitive advantages are compelling: dramatic cost reduction enabling previously impossible projects, production speed supporting rapid content iteration and updates, unlimited scalability without proportional cost increases, global accessibility through affordable multilingual production, and consistent quality across unlimited content volume.
